The following is taken from The Barometer Handbook by David Burch. All references are to that text.

Recalling that the vertical rate of pressure change is always thousands of times higher than the horizontal rate that creates the wind and weather we care about, it is easy to see that observed pressures at various elevations must be carefully normalized to sea level if we are to learn about the true pressure pattern at hand.

In this section we outline how meteorologists determine sea level pressures from the reports they receive from varying elevations. We do not have call to do this ourselves very often, but the procedures are here if you care to. To be more precise, this is how meteorologists used to do it, based on procedures specified in detail in the Manual of Barometry (WBAN). These procedures give some insight into the physical factors that contribute to the reduction, but in practice today they use a much more empirical method, covered at the end of this section.

Step one is to clarify the concept of sea level pressure at, for example, a high plateau located inland, far from the sea—or even far from anywhere whose elevation might be near sea level. This is certainly an abstract concept, but one that is needed to normalize the observations.

The procedure is to imagine a large hole in the ground at the elevated station that reaches down to sea level. Then the question reduces to estimating what the pressure would be at the bottom of this hole based on the pressure we read at the elevated station level, along with the temperature and dew point of the air at the station level.

We know the weight of the air from the station level on up to the top of the atmosphere. That is just the station pressure we observe. So the problem reduces to figuring out how much the fictitious air column weighs in the fictitious hole.

An easy way to approximate the answer is to assume the air in the hole behaves exactly like the International Standard Atmosphere (ISA). Then we can just go to Table A2 and look up the answer. For example, consider being at an elevation of 1,200 feet above sea level. From Table A2 we see that this elevation corresponds to a pressure drop of 43.2 mb in the standard atmosphere. So if our actual station pressure were 985.5 mb, we would estimate that the pressure at sea level was 985.5 + 43.2 = 1028.7 mb.
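
The Table A2 lookup can be approximated with the standard ISA barometric formula. The sketch below is only an illustration: the constants (1013.25 mb, 288.15 K, 0.0065 K/m, exponent 5.25588) are the usual ISA values rather than numbers quoted from this text, and the function names are made up for the example.

```python
ISA_P0_MB = 1013.25          # ISA sea level pressure, mb
ISA_T0_K = 288.15            # ISA sea level temperature, K
ISA_LAPSE_K_PER_M = 0.0065   # ISA tropospheric lapse rate, K per meter
ISA_EXPONENT = 5.25588       # g / (R * lapse rate) for dry air
FT_TO_M = 0.3048


def isa_pressure_mb(elevation_m):
    """ISA pressure (mb) at a given height within the troposphere."""
    return ISA_P0_MB * (1.0 - ISA_LAPSE_K_PER_M * elevation_m / ISA_T0_K) ** ISA_EXPONENT


def isa_sea_level_estimate_mb(station_pressure_mb, elevation_ft):
    """Add the ISA pressure drop for this elevation to the station pressure."""
    drop_mb = ISA_P0_MB - isa_pressure_mb(elevation_ft * FT_TO_M)
    return station_pressure_mb + drop_mb


# The 1,200 ft example from the text.
drop_1200ft = ISA_P0_MB - isa_pressure_mb(1200 * FT_TO_M)
print(f"ISA drop at 1,200 ft: {drop_1200ft:.1f} mb")
print(f"Sea level estimate:   {isa_sea_level_estimate_mb(985.5, 1200):.1f} mb")
```

This should land within about a tenth of a millibar of the table values used above.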

This approximation assumes the air in the hole has exactly the average properties of the standard atmosphere. This is unlikely to be true, and we could even know this ahead of time by comparing the station pressure and temperature with the standard atmosphere values at our elevation. We can improve on this ISA approximation significantly, but it takes some number crunching to do so.

The weight of the air in the hole depends on the density of the air, which in turn depends on the average temperature of the air column as well as the moisture content—the ISA assumes dry air (relative humidity = 0%). For a better estimate of the weight of the air column, we need a better estimate of the average temperature of the air column. A complicating factor is the amount of water vapor in the air. This not only changes the density of the air directly, it also affects how the temperature changes with increasing elevation.

The standard way to simplify these calculations is to define the “virtual temperature” (Tv) of moist air as the temperature that dry air must have in order to produce the same pressure and density the moist air has. The definition is illustrated in Figure A3-1.

We can then study the properties of a column of moist air as if it were dry air by replacing the average temperature with an average Tv. The formula for Tv depends on the station temperature, pressure, and dew point. In principle, each equation in Chapter 9 on altimetry that contains a T, should have that T replaced with Tv for the most accurate results. We will calculate this Tv in a moment, but first a more basic practical matter.

We will need a measurement of the station pressure if we are to find the sea level pressure. If you have actually measured the station pressure yourself, then you are done. That is the one you will use. But if you are testing this procedure by analyzing data from another location, you still need the station pressure at that location, and you will soon learn that this information may not be available. With the exception mentioned at the end of this section, station pressures are rarely reported. Instead, the stations automatically reduce their station pressures to sea level pressures and report those. All airport reports, however, include the altimeter setting, discussed in Chapter 9. The reports are called “Metars,” derived from a French phrase meaning weather reports from airports.

Altimeter setting, by definition, depends only on the station pressure and elevation of the station, so we can unfold the altimeter setting (AS) to get the station pressure (Ps) we need from the equation:

Ps = [AS^0.1903 - (1.313 x 10^-5) x H]^5.255,

where H is the station elevation in feet. This is the hypsometric equation with the temperature replaced with the ISA lapse rate. AS is given in inches of mercury, so Ps will be inches of mercury as well, but we can convert to mb as:

Ps (mb) = 33.864 x Ps (inches)
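
As a quick check on the two equations above, here is a minimal sketch that reads 0.1903, -5, and 5.255 as exponents. The function names are hypothetical, and the sample inputs are the Colorado Springs altimeter setting and elevation used in the worked example later in this section.

```python
INHG_TO_MB = 33.864  # conversion factor given in the text


def station_pressure_inhg(altimeter_inhg, elevation_ft):
    """Station pressure (inches Hg) from altimeter setting (inches Hg) and elevation (feet)."""
    return (altimeter_inhg ** 0.1903 - 1.313e-5 * elevation_ft) ** 5.255


def station_pressure_mb(altimeter_inhg, elevation_ft):
    """Same result converted to millibars."""
    return INHG_TO_MB * station_pressure_inhg(altimeter_inhg, elevation_ft)


# Altimeter setting 30.17 inches at 6171 ft.
print(f"Ps = {station_pressure_inhg(30.17, 6171):.2f} in Hg")
print(f"Ps = {station_pressure_mb(30.17, 6171):.1f} mb")
```

At zero elevation the two exponents nearly cancel (0.1903 x 5.255 is almost exactly 1), so the station pressure equals the altimeter setting, as it should.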

The above two equations are not from the WBAN procedures, but taken directly from NWS computer code. I apologize for the mixed units necessary if we use the exact equations presented in both methods.

Once we have the station pressure, we can proceed with the WBAN procedure by computing the virtual temperature of the air. Start with finding the vapor pressure of the air (e) in mb from:

e = 6.11 x 10^E

where e is in mb,

E = 7.5 x Td/(237.7 + Td),

and Td is the dew point of the station air in °C. Then we can find Tv in °K from:

Tv = (Ts + 273.15)/[1 - 0.379 x (e/Ps)]

where e and Ps are in mb, and Ts is the station air temperature in °C. The factor of 0.379 is approximately one minus the ratio of the molecular weights of water to dry air (about 0.622).
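
The vapor pressure and virtual temperature formulas can be sketched in a few lines. This is an illustration with made-up function names; the only constants are those given in the text (6.11, 7.5, 237.7, 0.379), with the 10 in the vapor pressure formula raised to the power E.

```python
def vapor_pressure_mb(dew_point_c):
    """Vapor pressure e (mb) from the dew point (deg C)."""
    exponent = 7.5 * dew_point_c / (237.7 + dew_point_c)
    return 6.11 * 10.0 ** exponent


def virtual_temperature_k(station_temp_c, dew_point_c, station_pressure_mb):
    """Virtual temperature Tv (kelvin) at the station level."""
    e_mb = vapor_pressure_mb(dew_point_c)
    return (station_temp_c + 273.15) / (1.0 - 0.379 * e_mb / station_pressure_mb)


# Values from the Colorado Springs example later in this section.
tv_k = virtual_temperature_k(8.9, 2.8, 813.8)
print(f"e  = {vapor_pressure_mb(2.8):.2f} mb")
print(f"Tv = {tv_k:.1f} K = {tv_k - 273.15:.1f} C")
```

Because the denominator is slightly less than 1 whenever there is any water vapor, Tv is always a little warmer than the measured air temperature.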

The Ts, as always, takes special care. It is the temperature of the air at the station elevation, but not at the time the station pressure was measured. This Ts should be the average of the temperature at the time of the pressure measurement and the temperature at the station 12 hours earlier. Add the two and divide by 2. It has been found over the years that this accounts for the small, but detectable diurnal variation of the pressure (Table 5.6-1). This whole process is an attempt to do the best at a difficult task, so every factor counts.

Once we have Tv at the station level, we need to figure the average Tv in the fictitious air column. At this point we fall back on the ISA for an estimate of how the temperature changes in the fictitious air column. To find the mean virtual temperature (Tmv) in °K use the ISA lapse rate to get:

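Tmv = Tv + 0.0065 x (H/2),

where Tv is the station-level virtual temperature in °K found above and H is the station elevation in meters. If Tv is instead expressed in °C, add 273.15 to convert the result to °K.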

Now we rewrite the hypsometric equation from Chapter 9 for the sea level pressure P1 = Psl, P2 = Ps, with Z1=0 and Z2 = H = height of the station in meters as:

Psl = r x Ps,

where

r = exp[ H / (29.28980 x Tmv)].

r is a dimensionless ratio, called the “pressure reduction ratio.” H must be in meters and Tmv in °K. Recall °K = °C + 273.15°.

This can be thought of as the basic solution. As an example, check data from Table A2, such as H = 600 m, Ts = 11°C (in dry air Tv = Ts), with Ps = 943.2 mb. Then you should find that Psl = 1013.25 mb to within rounding, since we used the ISA values. Change Tv to 2°C to get 1015.6 mb or use 20°C to get 1011.0 mb. If you assume the relative humidity of that 20°C air is 75%, then the dew point is 15.4°C, and this will yield Tv = 22.1°C, which in turn would imply Psl = 1010.5 mb. The humidity correction is more important in warm air than in cold.
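
The basic solution can be checked numerically with the sketch below. It rests on two assumptions that should be read as such: the station-level Tv is taken in kelvin and the half-column ISA increment 0.0065 x (H/2) is added to it to get Tmv, and the station pressure is taken as 943.2 mb, which is the ISA pressure at 600 m computed from the standard barometric formula rather than read from Table A2. Function names are hypothetical.

```python
import math

COLUMN_CONSTANT_M_PER_K = 29.28980   # constant from the reduction ratio formula in the text
ISA_LAPSE_K_PER_M = 0.0065


def mean_virtual_temperature_k(tv_k, height_m):
    """Mean virtual temperature of the fictitious column (kelvin)."""
    return tv_k + ISA_LAPSE_K_PER_M * height_m / 2.0


def reduction_ratio(height_m, tmv_k):
    """Pressure reduction ratio r = Psl / Ps."""
    return math.exp(height_m / (COLUMN_CONSTANT_M_PER_K * tmv_k))


def sea_level_pressure_mb(ps_mb, height_m, tv_k):
    """Basic WBAN-style reduction with no geopotential or plateau correction."""
    tmv_k = mean_virtual_temperature_k(tv_k, height_m)
    return ps_mb * reduction_ratio(height_m, tmv_k)


# The 600 m example: dry ISA air, colder air, warmer air, and warm moist air.
for tv_c in (11.0, 2.0, 20.0, 22.1):
    psl = sea_level_pressure_mb(943.2, 600.0, tv_c + 273.15)
    print(f"Tv = {tv_c:5.1f} C  ->  Psl = {psl:.1f} mb")
```

Colder air in the fictitious column is denser, so it adds more pressure and the reduced sea level value comes out higher.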

This basic solution is the one generally used for stations below 50 meters elevation in the WBAN procedure. For higher stations two more corrections are made. First the height H is converted to a geopotential height (Hgp), because the weight of the air depends on gravity, and the strength of the gravitational force varies with latitude and with elevation. This is a very small effect, but it can adjust a high elevation by several meters, which could have an effect on the pressure that is larger than what the humidity does. Samples of geopotential corrections are given in Table A3-1. The correction is made up of two terms. The latitude factor increases H with increasing latitude, whereas the elevation factor decreases it with increasing elevation.

Finally there is what is called the “Plateau Correction” to the temperature, which can be a significant correction of up to 10°C or more to Tv, leading to large changes in Psl for high elevations in extreme temperatures. The correction was first proposed by William Ferrel in 1886, which is more evidence of his genius. His reasoning and reckoning still apply today, though there have been improvements to this overall process since then.

Ferrel noted that average summertime sea level pressures deduced at high elevations were too low, and average wintertime sea level pressures were too high, compared to averages from around the country determined at lower elevations. When deduced at high elevations, the summer-winter difference in average sea level pressures was about 10 mb higher than from stations closer to sea level. In other words, he noted an effect that was obviously caused by the land within a process that was supposed to remove the effects of land. And so a correction was called for.

He concluded that the effective lapse rate must be different when the high land is present from what it would be if the land were removed. In short, the practice of using the ISA lapse rate for the fictitious air column was not right, and the seasonal average sea level pressure differences gave him a way to estimate a correction.

He formulated his correction to be applied to the sea level pressure itself as:

Correction (mb) = 0.064 (Ts-Tn) ( H/1,000),

where H is elevation in feet, Ts is the station temperature, and Tn is the annual average temperature at the station, both in °C. Thus an air temperature that is 20°C higher than the average temperature at an elevation of 5,000 ft would add 6.4 mb to the sea level pressures. This correction smooths out the seasonal differences seen in average sea level pressures across the land.
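
Ferrel's original correction is simple enough to sketch directly. The function name is made up for illustration, and the second set of sample values (a winter day 15°C below the annual mean at 6,000 ft) is hypothetical.

```python
def ferrel_correction_mb(station_temp_c, annual_mean_temp_c, elevation_ft):
    """Ferrel's correction (mb) to be added to the reduced sea level pressure."""
    return 0.064 * (station_temp_c - annual_mean_temp_c) * (elevation_ft / 1000.0)


# Example from the text: 20 C above the annual mean at 5,000 ft.
print(f"{ferrel_correction_mb(30.0, 10.0, 5000):+.1f} mb")

# Hypothetical winter case: 15 C below the annual mean at 6,000 ft.
print(f"{ferrel_correction_mb(-5.0, 10.0, 6000):+.1f} mb")
```

The sign follows the temperature anomaly, so warm-season values are raised and cold-season values are lowered, which is the seasonal smoothing Ferrel was after.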

By 1900 it was recognized that this correction could be improved by reformulating it in terms of adjustments to the lapse rate itself, yielding a more accurate mean virtual temperature. In modern times, each weather station over 50 meters high reporting sea level pressures has its own Plateau Correction factor it uses to optimize the reduction to sea level. Samples are presented in Table A3-2 for stations above and below 1,000 ft elevation.

The Plateau Correction is called F(s) as a reminder that it depends on the station. It is applied to Tmv as:

Tmv → Tmv + F(s).

Ferrel had developed one of the first ways to decide if the “sea level pressures” over elevated lands were correct. He also looked, as others did and still do, at neighboring stations that might be at lower elevations to compare their sea-level results to seek a uniform flow of the sea-level isobars.

Another evaluation used today is to plot out the sea level isobars predicted by the sum of all the station reports, and then compare the wind speeds and directions they predict with what is actually observed. In one sense, this is the ultimate test. We want the isobars so we can predict the wind, and if we do get isobars that predict the wind properly then we are doing a good job of measuring and deducing the isobars.

In modern meteorology there is still another crucial way to evaluate the reduction process and that is to compare the measured isobars with those predicted by any of several computerized atmospheric models. The models predict many properties of the atmosphere, at many levels of the atmosphere, not just at sea level. To the extent these other predicted properties agree with the observations, we want the predicted isobars to agree with observations as well.

If a model, for example, reproduced the isobars and other properties of the atmosphere over low lands very well, but over high lands or steep slopes the predicted isobars did not agree, but still other predicted properties of the atmosphere did agree, then we could consider that maybe the model is right and the way we are deducing the isobars in these difficult regions is not yet optimized. In short, the interplay between model predictions and deduced sea level pressures is yet another way to evaluate the process, and one that is actively pursued at present.

Figure A3-2 shows samples of how the station pressure reduction constants might be evaluated with model computations to get the most useful set of sea level isobars.

Sample Pressure Reduction

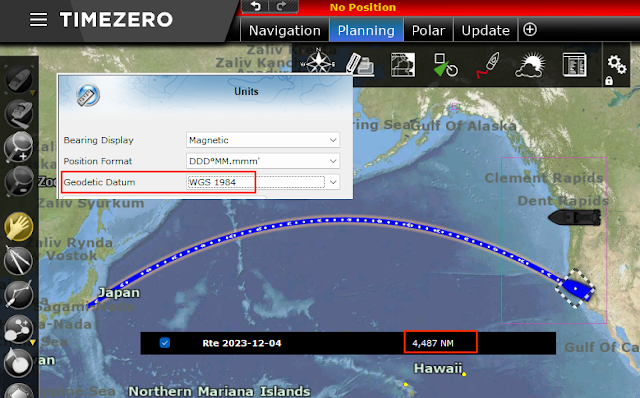

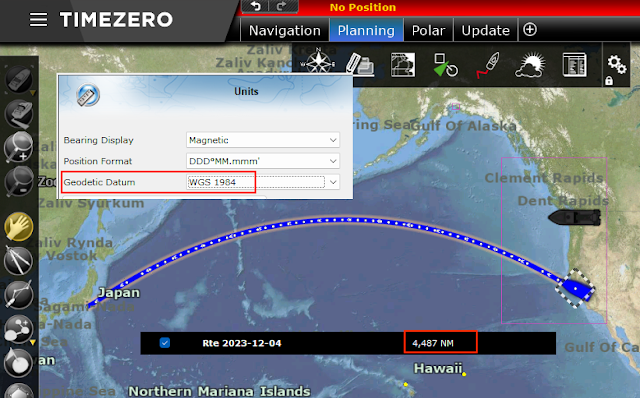

KCOS is Colorado Springs, CO, with station elevation 6171 ft (1880.9 m) and latitude 38.8°N. It gave this Metar report: “101554Z AUTO 05005KT 10SM SCT020 OVC029 08/03 A3017 RMK AO2 SLP194 T00830028 TSNO.” Decoded, this reads: Observed 1554 UTC 10 May 2009, Temperature: 8.3°C (47°F), Dewpoint: 2.8°C (37°F) [RH = 68%], Pressure (altimeter): 30.17 inches Hg (1021.8 mb) [Sea level pressure: 1019.4 mb].

The question is, how did they get the reported sea level pressure of 1019.4 mb?

WBAN Procedure

Step (1). Find the reported station temperature from 12h earlier, which is: Observed 0354 UTC 10 May 2009, Temperature: 9.4°C (49°F), and from this figure the average station temperature. Ts = (9.4+8.3)/2 = 8.9°C = 48°F.

Step (2). From the altimeter setting (30.17) and elevation (6171 ft), find the station pressure Ps = 24.03” = 813.8 mb.

Step (3). From Ps (813.8 mb), Ts (8.9°C), and Td (2.8°C), find virtual temperature Tv = 9.8°C = 283.0°K

Step (4). From H = 1880.9 m (6171 ft) at Lat = 38.8 N and Table A3-1, find geopotential height Hgpm = 1880.5 m.

Step (5). From Hgpm (1880.5 m), Tv (9.8°C) find mean virtual temperature Tmv = 15.9°C = 289.1°K

Step (6). From Ts (8.9°C = 48°F) and interpolation of Table A3-2, find Plateau Correction F(s) = -7.4 F° = -7.4 x (5/9) = -4.1 C°. Note the correction is a temperature interval, not a temperature.

Step (7). From corrected Tmv (15.9 - 4.1 = 11.8°C) and Hgpm (1880.5m) find r = 1.2527, and using Ps = 813.8 we find Psl = 1019.4 mb.

This agrees with the Metar report, but the result is very sensitive to which values are rounded at which stage of the computation. Changes could lead to variations of ±0.2 mb. Multiple tests from various stations would have to be done to see how well this historic method compares to the modern method used in the U.S. NWS. Other nations use other procedures.
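
The seven steps can be strung together as in the sketch below. It is not the NWS code, only a restatement of the steps above under stated assumptions: the geopotential height (1880.5 m) and the Plateau Correction (-7.4 F°) are passed in as given values because they come from Tables A3-1 and A3-2, the mean virtual temperature is taken as Tv in kelvin plus 0.0065 x (Hgpm/2), and no intermediate rounding is done. The function and parameter names are hypothetical.

```python
import math


def wban_sea_level_pressure(altimeter_inhg, elevation_ft, geopotential_height_m,
                            temp_now_c, temp_12h_ago_c, dew_point_c,
                            plateau_correction_f=0.0):
    """Return (Psl in mb, reduction ratio r) following steps 1 to 7."""
    # Step 1: average the current temperature with the one from 12 hours earlier.
    ts_c = (temp_now_c + temp_12h_ago_c) / 2.0

    # Step 2: station pressure from altimeter setting and elevation in feet.
    ps_inhg = (altimeter_inhg ** 0.1903 - 1.313e-5 * elevation_ft) ** 5.255
    ps_mb = 33.864 * ps_inhg

    # Step 3: vapor pressure, then virtual temperature in kelvin.
    e_mb = 6.11 * 10.0 ** (7.5 * dew_point_c / (237.7 + dew_point_c))
    tv_k = (ts_c + 273.15) / (1.0 - 0.379 * e_mb / ps_mb)

    # Steps 4 and 5: mean virtual temperature over the geopotential height.
    tmv_k = tv_k + 0.0065 * geopotential_height_m / 2.0

    # Step 6: Plateau Correction, a temperature interval converted from F to C degrees.
    tmv_k += plateau_correction_f * 5.0 / 9.0

    # Step 7: reduction ratio and sea level pressure.
    r = math.exp(geopotential_height_m / (29.28980 * tmv_k))
    return ps_mb * r, r


psl_mb, r = wban_sea_level_pressure(
    altimeter_inhg=30.17, elevation_ft=6171, geopotential_height_m=1880.5,
    temp_now_c=8.3, temp_12h_ago_c=9.4, dew_point_c=2.8,
    plateau_correction_f=-7.4,
)
print(f"r = {r:.4f}, Psl = {psl_mb:.1f} mb")
```

Run without rounding, this should come out within a tenth or two of a millibar of the reported value, which illustrates the rounding sensitivity noted above.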

ASOS Procedure

Starting sometime around 1992, the NWS, in collaboration with the Federal Aviation Administration and the Department of Defense, initiated the Automated Surface Observing System (ASOS) to collect and distribute weather data around the country. The data are collected by high precision sensors, then evaluated and analyzed by software at the stations, and the results are transmitted to the various agencies and made available to the public.

Atmospheric pressure measurements are of course a crucial part of the program. Each station includes multiple electronic pressure sensors, which are compared to each other continuously. From the measured pressure at known elevation, along with the temperature and dew point, the ASOS software computes: station pressure, pressure tendency, altimeter setting, sea level pressure, density altitude, and pressure altitude.

The station pressure and altimeter setting are determined from the sensor pressures independently, but they are related as mentioned earlier. Since they are computed independently, you will find times when the equation given does not relate them exactly as they are published. You can find station pressures, altimeter settings, and sea level pressures to practice with and compare at this link

http://www.wrh.noaa.gov/mesowest/getobext.php?table=1&wfo=lox&sid=KCOS

by changing the last 4 letters to the Metar station ID of interest. To find the closest Metar station to your location you can use www.starpath.com/barometers. To find the specifications of the station (elevation, location, ID, even accuracy!) go to (with the correct Metar ID):

https://weather.gladstonefamily.net/site/KCMI.

The ASOS procedures have simplified the WBAN procedure significantly, and after crunching numbers with the latter procedure for some hours it is easy to appreciate the virtue of this approach. They no longer use mean virtual temperatures or plateau corrections, but instead simply define the sea level pressure as

Psl = Ps x r + C,

where r is the pressure reduction ratio and C is the pressure reduction constant. A station will use either r or C, but not both. Typically stations below 100 ft would use C, in which case r = 1. C is then basically the ISA correction, perhaps adjusted to some extent for the location. It does not depend on temperature.

Higher stations use r values (C = 0) from a table of values stored in the local ASOS computer that are unique to that station. A sample for KCOS is shown in Table A3-3. Using this table, and Ts = 48°F,

Psl = 813.8 x 1.2526 = 1019.4 mb,

which is obviously easier to obtain than using the WBAN procedure—if we happen to know the official r factors. At least for now, these do not seem to be public information, so the WBAN method is the only guideline for making these reductions at arbitrary locations. Even with that, we must make some estimate of the Plateau Correction based on WBAN values.
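
The ASOS formula is short enough to sketch in full. The function name is made up, and since the official r and C values are not public, the only real number used here is the KCOS ratio quoted above; the low-station inputs (Ps = 1010.0 mb, C = 3.0 mb) are invented purely to show the other branch.

```python
def asos_sea_level_pressure_mb(station_pressure_mb, r=1.0, c_mb=0.0):
    """Psl = Ps x r + C, where a station uses either r or C but not both."""
    if r != 1.0 and c_mb != 0.0:
        raise ValueError("a station uses either r or C, not both")
    return station_pressure_mb * r + c_mb


# High station (KCOS): ratio from the station table, C = 0.
print(f"{asos_sea_level_pressure_mb(813.8, r=1.2526):.1f} mb")

# Low station: hypothetical constant, r = 1.
print(f"{asos_sea_level_pressure_mb(1010.0, c_mb=3.0):.1f} mb")
```

All of the temperature dependence is folded into the table lookup for r, which is why the computation itself reduces to one multiplication or one addition.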

See also our related note where we show it is important to use the 12-hr average temperature.